Autoscaling Applications on Kubernetes - Cluster Autoscaling

TL;DR - Kubernetes Cluster Autoscaler automatically scales your cluster by adding nodes when more capacity is needed and removing them when it is not. This ensures that the cluster always provides enough capacity to run your applications.

In the first part of the series, I introduced the core concepts of scaling in Kubernetes, which consists of two parts: application scaling and cluster scaling.

As part of that introduction, we discussed the impact on your application design and how you can manually scale your cluster and application.

Today's article is the second post in our "Autoscaling Applications on Kubernetes" series, where we will look at how you can automatically scale your cluster, how this works, and how you can achieve this on Microsoft Azure.

Here is a full overview of the series:

- Part I - A Primer

- Part II - Cluster Autoscaler

- Part III - Application Autoscaling

- Part IV - Scaling Beyond The Cluster

- Part V - Scaling based on Azure metrics

- Part VI - Autoscaling is not easy

Introducing Cluster Autoscaler

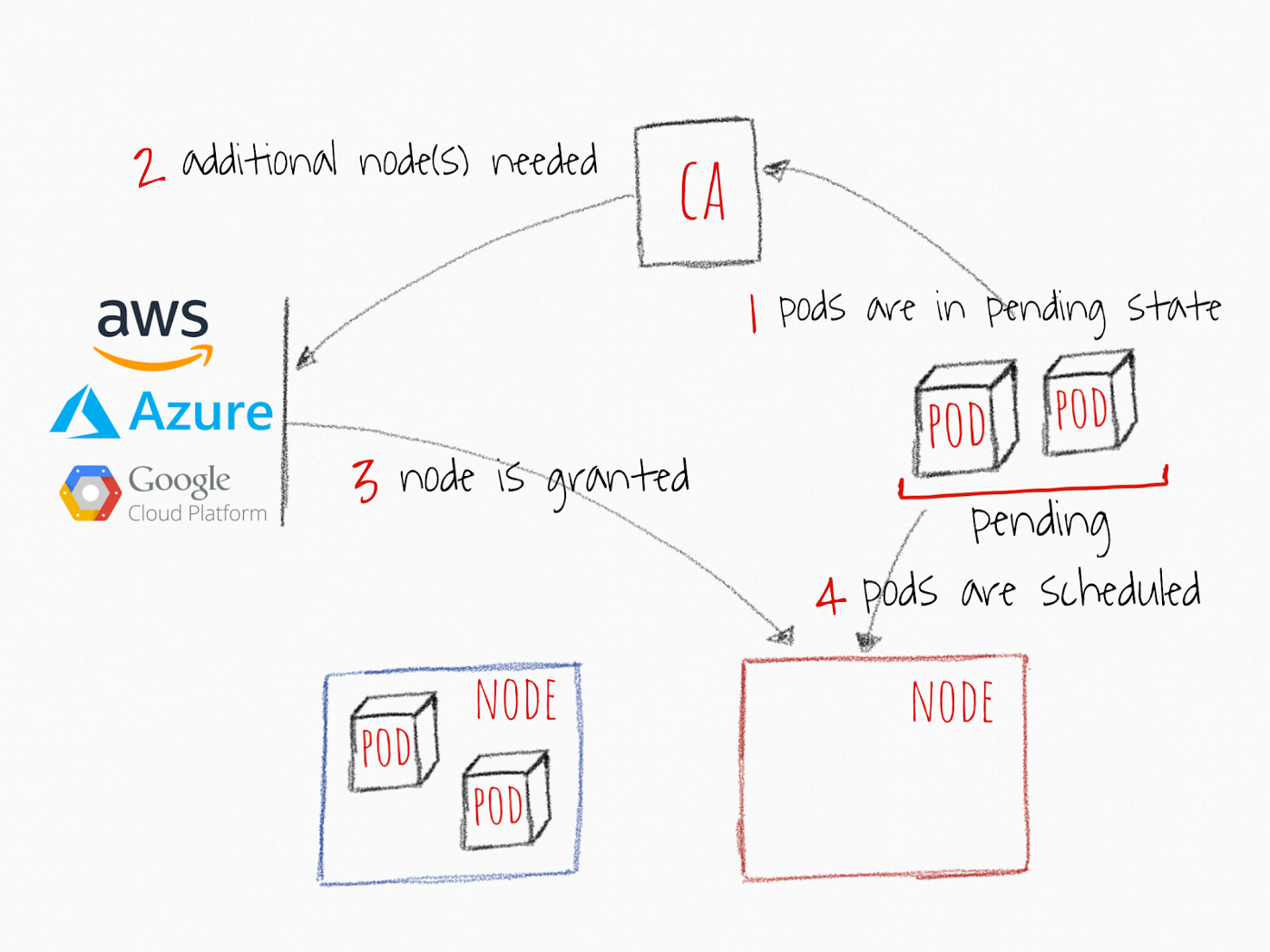

The Cluster Autoscaler (CA) is a tool that automatically scales your cluster. This means that you are no longer in charge of adding nodes; it is all taken care of for you.

It adds nodes when there is demand for capacity, but it also removes nodes when they are no longer needed. This is very important for bursty workloads where you want to fan out yet stay as cost-efficient as possible.
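To make the add/remove behaviour a bit more concrete, here is a minimal Python sketch of that decision. It is a deliberate simplification and not the actual Cluster Autoscaler algorithm, which also simulates scheduling and checks whether pods can be moved before removing a node. The `NodePool` type, the `desired_change` function and the 0.5 utilization threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class NodePool:
    min_size: int
    max_size: int
    # Fraction of requested resources per node, 0.0 to 1.0 (hypothetical input).
    node_utilization: List[float]


def desired_change(pool: NodePool, pending_pods: int,
                   scale_down_threshold: float = 0.5) -> int:
    """Return +1 to add a node, -1 to remove one, 0 to do nothing."""
    size = len(pool.node_utilization)
    # Scale up: pods are waiting for capacity and the pool may still grow.
    if pending_pods > 0 and size < pool.max_size:
        return 1
    # Scale down: nothing is waiting, a node is underused, the pool may shrink.
    if pending_pods == 0 and size > pool.min_size:
        if min(pool.node_utilization) < scale_down_threshold:
            return -1
    return 0


pool = NodePool(min_size=1, max_size=5, node_utilization=[0.9, 0.2])
print(desired_change(pool, pending_pods=3))  # 1
print(desired_change(pool, pending_pods=0))  # -1
```

The minimum and maximum size act as guard rails: no matter how many pods are pending, the pool never grows beyond `max_size`, which keeps a burst from turning into an unbounded bill.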

Cluster Autoscaler reached General Availability (GA) as of Kubernetes v1.12! You can read more in the announcement on the Kubernetes blog.

Running Cluster Autoscaler in the cloud

You can now use the Cluster Autoscaler on Microsoft Azure to scale your AKS clusters, provided your cluster is running Kubernetes v1.10.X.

More information on this can be found on the Cluster Autoscaler's official Azure provider docs or in the AKS documentation.

Using another cloud provider? No worries, all cloud providers supporting this are listed here.

Cluster Autoscaler with Azure Kubernetes Service

Microsoft Azure is one of those cloud providers that support Cluster Autoscaler!

Cluster Autoscaler on Azure Kubernetes Service is now in public preview, which means you can start autoscaling your AKS cluster!

To use Cluster Autoscaler, your AKS cluster must run Kubernetes v1.10.X and have RBAC enabled.

Great! Now you can use the sample YAML specification to deploy it into your cluster via kubectl create.

Once it's up and running you can use kubectl describe on the config map to get the status of your scaling as per this documentation:

```
$ kubectl -n kube-system describe configmap cluster-autoscaler-status
Name:         cluster-autoscaler-status
Namespace:    kube-system
Labels:       <none>
Annotations:  cluster-autoscaler.kubernetes.io/last-updated=2018-07-25 22:59:22.661669494 +0000 UTC

Data
====
status:
----
Cluster-autoscaler status at 2018-07-25 22:59:22.661669494 +0000 UTC:
Cluster-wide:
  Health:      Healthy (ready=1 unready=0 notStarted=0 longNotStarted=0 registered=1 longUnregistered=0)
               LastProbeTime:      2018-07-25 22:59:22.067828801 +0000 UTC
               LastTransitionTime: 2018-07-25 00:38:36.41372897 +0000 UTC
  ScaleUp:     NoActivity (ready=1 registered=1)
               LastProbeTime:      2018-07-25 22:59:22.067828801 +0000 UTC
               LastTransitionTime: 2018-07-25 00:38:36.41372897 +0000 UTC
  ScaleDown:   NoCandidates (candidates=0)
               LastProbeTime:      2018-07-25 22:59:22.067828801 +0000 UTC
               LastTransitionTime: 2018-07-25 00:38:36.41372897 +0000 UTC

NodeGroups:
  Name:        nodepool1
  Health:      Healthy (ready=1 unready=0 notStarted=0 longNotStarted=0 registered=1 longUnregistered=0 cloudProviderTarget=1 (minSize=1, maxSize=5))
               LastProbeTime:      2018-07-25 22:59:22.067828801 +0000 UTC
               LastTransitionTime: 2018-07-25 00:38:36.41372897 +0000 UTC
  ScaleUp:     NoActivity (ready=1 cloudProviderTarget=1)
               LastProbeTime:      2018-07-25 22:59:22.067828801 +0000 UTC
               LastTransitionTime: 2018-07-25 00:38:36.41372897 +0000 UTC
  ScaleDown:   NoCandidates (candidates=0)
               LastProbeTime:      2018-07-25 22:59:22.067828801 +0000 UTC
               LastTransitionTime: 2018-07-25 00:38:36.41372897 +0000 UTC
```
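If you would rather not read this by eye every time, a small script can condense it. The following is a rough Python sketch, assuming the status text keeps the layout shown above; the `summarize` helper and its regular expressions are my own illustration and not part of Cluster Autoscaler. It expects only the status text itself, not the `Name:`/`Namespace:` header that kubectl describe prints first.

```python
import re
from typing import Dict, List

# Shortened copy of the status text shown above (timestamps left out).
STATUS = """\
Cluster-wide:
  Health: Healthy (ready=1 unready=0 notStarted=0 longNotStarted=0 registered=1 longUnregistered=0)
  ScaleUp: NoActivity (ready=1 registered=1)
  ScaleDown: NoCandidates (candidates=0)
NodeGroups:
  Name: nodepool1
  Health: Healthy (ready=1 unready=0 notStarted=0 longNotStarted=0 registered=1 longUnregistered=0 cloudProviderTarget=1 (minSize=1, maxSize=5))
  ScaleUp: NoActivity (ready=1 cloudProviderTarget=1)
  ScaleDown: NoCandidates (candidates=0)
"""

FIELD = re.compile(r"^\s*(Name|Health|ScaleUp|ScaleDown):\s+(\S+)")
LIMITS = re.compile(r"minSize=(\d+), maxSize=(\d+)")


def summarize(status: str) -> List[Dict[str, str]]:
    """Return one dict per section: the cluster-wide one, then each node group."""
    sections: List[Dict[str, str]] = []
    for line in status.splitlines():
        if line.strip() == "Cluster-wide:":
            sections.append({"Name": "cluster-wide"})
            continue
        match = FIELD.match(line)
        if not match:
            continue  # timestamps and other lines are ignored
        key, value = match.groups()
        if key == "Name":
            sections.append({"Name": value})  # a new node group starts here
            continue
        if sections:
            sections[-1][key] = value
            limits = LIMITS.search(line)
            if limits:
                sections[-1]["minSize"], sections[-1]["maxSize"] = limits.groups()
    return sections


for section in summarize(STATUS):
    print(section["Name"], section["Health"], section["ScaleUp"], section["ScaleDown"])
```

Since the format of this config map is meant for humans, treat a parser like this as fragile: it can break whenever the layout changes.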

This provides some minimal insight into how your cluster is doing, but you have to go and check it yourself, which is a bit of a pity.

While this is a good start, there is still some streamlining required:

- No Azure Helm chart - Helm is the Kubernetes package manager that allows you to deploy a "recipe", also known as a chart, into your own cluster. A Helm chart would make it easy to deploy Cluster Autoscaler into our cluster, but there is none for Azure yet, although there is one for AWS.

- Azure Portal support - Alternatively, it would be great to enable this via the Azure Portal, which would then deploy all the required resources in my cluster.

If you want to have Azure Portal support, go vote for it!

How about autoscaling my cluster with Azure Monitor Autoscale?

Unfortunately, you cannot do that as of today.

A year ago, I wrote an article about how you can use Azure Monitor Autoscale to automatically scale your application based on a specific metric and how it gives you observability into what is going on.

It would be great to use this same experience for scaling my Azure Kubernetes Service cluster so that I can consolidate all my autoscaling rules for all my Azure resources in one central place.

Another benefit is that it would provide observability into what is going on, which is essential when running a platform! While Kubernetes emits events, there is no easy way to have those trigger another process to, for example, post a message on Slack.
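To give a feel for the glue you would have to write yourself today, here is a rough Python sketch that turns a Kubernetes event into the JSON body of a Slack incoming webhook. The `slack_payload` function, the set of event reasons and the sample event are assumptions for illustration; the sketch only builds the message and leaves out watching the event stream and sending the HTTP request, which is the plumbing you would still need to run somewhere.

```python
import json
from typing import Optional

# Event reasons to forward. Treat this set as an assumption and adjust it
# to the reasons you actually see in `kubectl get events`.
SCALING_REASONS = {"TriggeredScaleUp", "ScaleDown"}


def slack_payload(event: dict) -> Optional[str]:
    """Build a Slack incoming-webhook body for a scaling event, else None."""
    reason = event.get("reason", "")
    if reason not in SCALING_REASONS:
        return None  # not a scaling event, nothing to post
    involved = event.get("involvedObject", {})
    text = "{}: {} {} - {}".format(
        reason,
        involved.get("kind", "?"),
        involved.get("name", "?"),
        event.get("message", ""),
    )
    return json.dumps({"text": text})


# Hypothetical event, shaped like a core/v1 Event serialized to a dict.
example = {
    "reason": "TriggeredScaleUp",
    "message": "pod triggered scale-up",
    "involvedObject": {"kind": "Pod", "name": "web-1"},
}
print(slack_payload(example))
```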

Are you interested in this feature as well? Feel free to vote for it on UserVoice!

Conclusion

Cluster Autoscaler is a great way of automatically adding nodes to and removing nodes from your cluster, and it is fairly easy to use, especially if you are using a cloud provider.

However, it would be great if Azure Monitor Autoscale supported Azure Kubernetes Service. That would allow us to choose between running everything inside our cluster and delegating this to Azure Monitor, streamlining our autoscaling approach with other Azure resources.

Now that we know how we can ensure our cluster can deliver the resources that we need, we can start automatically scaling our applications.

But that's for our next article in this series!

Thanks for reading,

Tom.